Aurich Lawson

Friday afternoon, the OpenZFS project released version 2.1.0 of our perennial favorite “it’s complicated but it’s worth it” filesystem. The new release is compatible with FreeBSD 12.2-RELEASE and up, and Linux kernels 3.10-5.13. This release offers several general performance improvements, as well as a few entirely new features—mostly targeting enterprise and other extremely advanced use cases.

Today, we’re going to focus on arguably the biggest feature OpenZFS 2.1.0 adds—the dRAID vdev topology. dRAID has been under active development since at least 2015, and reached beta status when merged into OpenZFS master in November 2020. Since then, it’s been heavily tested in several major OpenZFS development shops—meaning today’s release is “new” to production status, not “new” as in untested.

Distributed RAID (dRAID) overview

If you already thought ZFS topology was a complex topic, get ready to have your mind blown. Distributed RAID (dRAID) is an entirely new vdev topology we first encountered in a presentation at the 2016 OpenZFS Dev Summit.

When creating a dRAID vdev, the admin specifies a number of data, parity, and hotspare sectors per stripe. These numbers are independent of the number of actual disks in the vdev. We can see this in action in the following example, lifted from the dRAID Basic Concepts documentation:

    root@box:~# zpool create mypool draid2:4d:1s:11c wwn-0 wwn-1 wwn-2 ... wwn-A
    root@box:~# zpool status mypool
      pool: mypool
     state: ONLINE
    config:
            NAME                  STATE     READ WRITE CKSUM
            mypool                ONLINE       0     0     0
              draid2:4d:11c:1s-0  ONLINE       0     0     0
                wwn-0             ONLINE       0     0     0
                wwn-1             ONLINE       0     0     0
                wwn-2             ONLINE       0     0     0
                wwn-3             ONLINE       0     0     0
                wwn-4             ONLINE       0     0     0
                wwn-5             ONLINE       0     0     0
                wwn-6             ONLINE       0     0     0
                wwn-7             ONLINE       0     0     0
                wwn-8             ONLINE       0     0     0
                wwn-9             ONLINE       0     0     0
                wwn-A             ONLINE       0     0     0
            spares
              draid2-0-0          AVAIL

dRAID topology

In the above example, we have eleven disks: wwn-0 through wwn-A. We created a single dRAID vdev with 2 parity devices, 4 data devices, and 1 spare device per stripe—in condensed jargon, a draid2:4:1.
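
To make the fields in that vdev spec concrete, here is a minimal Python sketch that splits a string like `draid2:4d:1s:11c` into its parts. `DraidSpec` and `parse_draid_spec` are hypothetical names of our own, not part of any OpenZFS tooling, and the parser only understands the suffixed form passed to `zpool create` above, not the condensed draid2:4:1 shorthand.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraidSpec:
    parity: int              # parity sectors per stripe (1 to 3)
    data: Optional[int]      # data sectors per stripe; None if not given
    spares: int              # distributed spares; 0 if not given
    children: Optional[int]  # total disks in the vdev; None if not given


def parse_draid_spec(spec: str) -> DraidSpec:
    """Split a suffixed dRAID spec such as 'draid2:4d:1s:11c' into fields."""
    head, *options = spec.split(":")
    match = re.fullmatch(r"draid([123])?", head)
    if match is None:
        raise ValueError(f"not a dRAID spec: {spec!r}")
    parity = int(match.group(1) or 1)  # a bare "draid" means single parity

    fields = {"d": None, "s": 0, "c": None}
    for option in options:
        match = re.fullmatch(r"(\d+)([dsc])", option)
        if match is None:
            raise ValueError(f"unrecognized option {option!r} in {spec!r}")
        fields[match.group(2)] = int(match.group(1))
    return DraidSpec(parity, fields["d"], fields["s"], fields["c"])


spec = parse_draid_spec("draid2:4d:1s:11c")
print(spec)
print("disks touched per data stripe:", spec.parity + spec.data)
```

The option order does not matter to this sketch, so the `draid2:4d:11c:1s` form printed by `zpool status` parses to the same result.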

Even though we have eleven total disks in the draid2:4:1, only six are used in each data stripe, and one slot in each physical stripe is reserved as spare capacity. In a world of perfect vacuums, frictionless surfaces, and spherical chickens, the on-disk layout of a draid2:4:1 would look something like this:

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | A |
|---|---|---|---|---|---|---|---|---|---|---|
| s | P | P | D | D | D | D | P | P | D | D |
| D | s | D | P | P | D | D | D | D | P | P |
| D | D | s | D | D | P | P | D | D | D | D |
| P | P | D | s | D | D | D | P | P | D | D |
| D | D | . | . | s | . | . | . | . | . | . |
| . | . | . | . | . | s | . | . | . | . | . |
| . | . | . | . | . | . | s | . | . | . | . |
| . | . | . | . | . | . | . | s | . | . | . |
| . | . | . | . | . | . | . | . | s | . | . |
| . | . | . | . | . | . | . | . | . | s | . |
| . | . | . | . | . | . | . | . | . | . | s |
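
The pattern in that idealized diagram is simple enough to generate: the spare slot walks diagonally across the disks, and every other slot is filled by repeating the P P D D D D group. The Python sketch below reproduces the first four rows. It models only the simplified picture, not the real on-disk layout, and `simplified_layout` is a made-up helper name.

```python
from itertools import cycle


def simplified_layout(disks=11, parity=2, data=4, rows=4):
    """Return rows of the idealized diagram: diagonal spare, repeating groups."""
    # Endless stream of sectors in group order: P P D D D D P P D D D D ...
    sectors = cycle("P" * parity + "D" * data)
    layout = []
    for row in range(rows):
        cells = [
            # The spare sits on the diagonal; every other slot takes the
            # next sector from the stream, so groups wrap across rows.
            "s" if disk == row % disks else next(sectors)
            for disk in range(disks)
        ]
        layout.append(" ".join(cells))
    return layout


for line in simplified_layout():
    print(line)
```

Because the stream of sectors carries over from one row to the next, a group that starts near the end of a row finishes at the start of the following one, which is why the groups drift relative to the disk columns.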

Effectively, dRAID is taking the concept of “diagonal parity” RAID one step farther. The first parity RAID topology wasn’t RAID5—it was RAID3, in which parity was on a fixed drive, rather than being distributed throughout the array.

RAID5 did away with the fixed parity drive, and distributed parity throughout all of the array’s disks instead—which offered significantly faster random write operations than the conceptually simpler RAID3, since it didn’t bottleneck every write on a fixed parity disk.

dRAID takes this concept—distributing parity across all disks, rather than lumping it all onto one or two fixed disks—and extends it to spares. If a disk fails in a dRAID vdev, the parity and data sectors which lived on the dead disk are copied to the reserved spare sector(s) for each affected stripe.

Let’s take the simplified diagram above, and examine what happens if we fail a disk out of the array. The initial failure leaves holes in most of the data groups (in this simplified diagram, stripes):

| 0 | 1 | 2 | 4 | 5 | 6 | 7 | 8 | 9 | A |
|---|---|---|---|---|---|---|---|---|---|
| s | P | P | D | D | D | P | P | D | D |
| D | s | D | P | D | D | D | D | P | P |
| D | D | s | D | P | P | D | D | D | D |
| P | P | D | D | D | D | P | P | D | D |
| D | D | . | s | . | . | . | . | . | . |

But when we resilver, we do so onto the previously reserved spare capacity:

| 0 | 1 | 2 | 4 | 5 | 6 | 7 | 8 | 9 | A |
|---|---|---|---|---|---|---|---|---|---|
| D | P | P | D | D | D | P | P | D | D |
| D | P | D | P | D | D | D | D | P | P |
| D | D | D | D | P | P | D | D | D | D |
| P | P | D | D | D | D | P | P | D | D |
| D | D | . | s | . | . | . | . | . | . |
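
The same toy model can show the rebuild step. In the Python sketch below, the first four rows of the original diagram are hard-coded, disk 3 is failed, and whatever sector it held in each row is written into that row's spare slot. `rebuild_onto_spares` is a hypothetical name, and this illustrates the simplified diagrams only, not the actual resilver code.

```python
def rebuild_onto_spares(rows, failed):
    """Fail one disk and copy its sector in each row into that row's spare."""
    rebuilt = []
    for row in rows:
        cells = list(row)
        lost = cells[failed]
        if lost != "s":
            # The failed disk held data or parity here: rewrite it onto
            # the spare slot reserved in this row.
            cells[cells.index("s")] = lost
        # If the failed disk held the spare itself, nothing was lost.
        del cells[failed]  # the dead disk drops out of the picture
        rebuilt.append("".join(cells))
    return rebuilt


# First four rows of the idealized layout, one character per disk (0 to A).
healthy = ["sPPDDDDPPDD", "DsDPPDDDDPP", "DDsDDPPDDDD", "PPDsDDDPPDD"]

for line in rebuild_onto_spares(healthy, failed=3):
    print(" ".join(line))
```

Each row writes its recovered sector to a different disk, which is the property that spreads the rebuild load across the whole vdev.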

Please note that these diagrams are simplified. The full picture involves groups, slices, and rows, which we aren’t going to try to get into here. The logical layout is also randomly permuted, based on the offset, to distribute things more evenly over the drives. Those interested in the hairiest details are encouraged to look at this detailed comment in the original code commit.

It’s also worth noting that dRAID requires fixed stripe widths—not the dynamic widths supported by traditional RAIDz1 and RAIDz2 vdevs. If we’re using 4kn disks, a draid2:4:1 vdev like the one shown above will require 24KiB on-disk for every metadata block, where a traditional six-wide RAIDz2 vdev would only need 12KiB. This discrepancy gets worse the higher the values of d+p get—a draid2:8:1 would require a whopping 40KiB for the same metadata block!

For this reason, the special allocation vdev is very useful in pools with dRAID vdevs—when a pool with draid2:8:1 and a three-wide special needs to store a 4KiB metadata block, it does so in only 12KiB on the special, instead of 40KiB on the draid2:8:1.
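
The arithmetic behind those figures can be checked with a short Python sketch. It is a simplified model under stated assumptions: 4KiB sectors, dRAID allocations rounded up to whole fixed-width stripes, RAIDz allocations padded to a multiple of parity+1 sectors, and a special vdev built as a three-way mirror. The function names are ours, not OpenZFS APIs.

```python
import math

SECTOR = 4096  # bytes per sector on a 4kn disk


def draid_alloc(block, data, parity, sector=SECTOR):
    """Bytes consumed on a dRAID vdev: always whole fixed-width stripes."""
    data_sectors = math.ceil(block / sector)
    stripes = math.ceil(data_sectors / data)
    return stripes * (data + parity) * sector


def raidz_alloc(block, width, parity, sector=SECTOR):
    """Bytes consumed on a RAIDz vdev: dynamic width, parity added per row."""
    data_sectors = math.ceil(block / sector)
    rows = math.ceil(data_sectors / (width - parity))
    total = data_sectors + rows * parity
    # Pad to a multiple of (parity + 1) sectors.
    total = math.ceil(total / (parity + 1)) * (parity + 1)
    return total * sector


def mirror_alloc(block, ways, sector=SECTOR):
    """Bytes consumed on an n-way mirror: one full copy per side."""
    return math.ceil(block / sector) * ways * sector


block = 4096  # one 4KiB metadata block
print("draid2:4:1     ", draid_alloc(block, data=4, parity=2) // 1024, "KiB")
print("6-wide RAIDz2  ", raidz_alloc(block, width=6, parity=2) // 1024, "KiB")
print("draid2:8:1     ", draid_alloc(block, data=8, parity=2) // 1024, "KiB")
print("3-way special  ", mirror_alloc(block, ways=3) // 1024, "KiB")
```

The gap closes for large blocks that fill whole stripes anyway, so the penalty falls mainly on small blocks such as metadata.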

dRAID performance, fault tolerance, and recovery

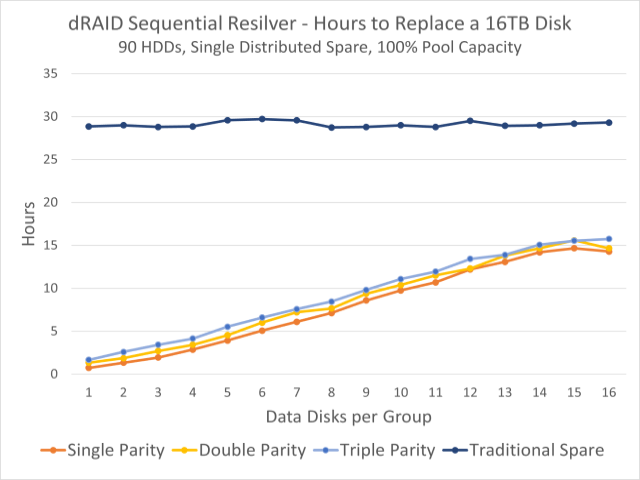

This graph shows observed resilvering times for a 90-disk pool. The dark blue line at the top is the time to resilver onto a fixed hotspare disk; the colorful lines beneath demonstrate times to resilver onto distributed spare capacity.

For the most part, a dRAID vdev will perform similarly to an equivalent group of traditional vdevs—for example, a draid1:2:0 on nine disks will perform near-equivalently to a pool of three 3-wide RAIDz1 vdevs. Fault tolerance is also similar—you’re guaranteed to survive a single failure with p=1, just as you are with the RAIDz1 vdevs.

Notice that we said fault tolerance is similar, not identical. A traditional pool of three 3-wide RAIDz1 vdevs is only guaranteed to survive a single disk failure, but will probably survive a second—as long as the second disk to fail isn’t part of the same vdev as the first, everything’s fine.

In a nine-disk draid1:2, a second disk failure will almost certainly kill the vdev (and the pool along with it), if that failure happens prior to resilvering. Since there are no fixed groups for individual stripes, a second disk failure is very likely to knock out additional sectors in already-degraded stripes, no matter which disk fails second.
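
A rough Python sketch puts numbers on that difference. The RAIDz1 figure is an exact count over every possible pair of failed disks. The dRAID figure is only a toy model: it assumes each row independently shuffles which disks share a group, which is our simplification and not how the real permutation scheme works.

```python
from itertools import combinations


def raidz1_two_failure_survival(vdevs=3, width=3):
    """Fraction of two-disk failures a pool of RAIDz1 vdevs survives."""
    disks = [(vdev, slot) for vdev in range(vdevs) for slot in range(width)]
    pairs = list(combinations(disks, 2))
    # The pool survives only if the two failed disks are in different vdevs.
    survived = sum(1 for a, b in pairs if a[0] != b[0])
    return survived / len(pairs)


def toy_draid_two_failure_survival(disks=9, width=3, rows=100):
    """Toy model: chance that no row puts both failed disks in one group."""
    # In any single row, a given second disk shares a group with the first
    # with probability (width - 1) / (disks - 1).
    same_group = (width - 1) / (disks - 1)
    return (1 - same_group) ** rows


print(f"three 3-wide RAIDz1: {raidz1_two_failure_survival():.0%}")
print(f"toy draid1:2, 100 rows: {toy_draid_two_failure_survival():.2e}")
```

With a single row the two cases are identical. It is the sheer number of differently arranged rows that drives the dRAID survival odds toward zero.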

That somewhat-decreased fault tolerance is compensated for with drastically faster resilver times. In the chart at the top of this section, we can see that in a pool of ninety 16TB disks, resilvering onto a traditional, fixed spare takes roughly thirty hours no matter how we’ve configured the dRAID vdev—but resilvering onto distributed spare capacity can take as little as one hour.

This is largely because resilvering onto a distributed spare splits the write load up amongst all of the surviving disks. When resilvering onto a traditional spare, the spare disk itself is the bottleneck—reads come from all disks in the vdev, but writes must all be completed by the spare. But when resilvering to distributed spare capacity, both read and write workloads are divvied up among all surviving disks.
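
A back-of-the-envelope Python sketch shows why the write bottleneck matters so much. The 150MB/s sustained write rate is our assumption, not a figure from the chart, and the model ignores read contention, parity math, and everything else that slows a real resilver, so the distributed number is an idealized lower bound rather than a prediction.

```python
def resilver_hours(capacity_tb, write_mb_s, writers=1):
    """Hours to rewrite one disk's worth of data across `writers` disks."""
    total_bytes = capacity_tb * 1e12
    seconds = total_bytes / (write_mb_s * 1e6 * writers)
    return seconds / 3600


# Assumed: 16TB disks that each sustain about 150MB/s of writes.
fixed_spare = resilver_hours(16, 150)             # one spare takes every write
distributed = resilver_hours(16, 150, writers=89) # 89 survivors share writes

print(f"fixed spare:       {fixed_spare:.1f} hours")
print(f"distributed spare: {distributed:.2f} hours (idealized)")
```

Under that assumption the fixed-spare estimate lands close to the roughly thirty hours shown in the chart, while the idealized distributed figure is well under the one hour actually observed, as expected for a model that leaves out all overhead.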

The distributed resilver can also be a sequential resilver, rather than a healing resilver—meaning that ZFS can simply copy over all affected sectors, without worrying about what blocks those sectors belong to. Healing resilvers, by contrast, must scan the entire block tree—resulting in a random read workload, rather than a sequential read workload.

When a physical replacement for the failed disk is added to the pool, that resilver operation will be healing, not sequential—and it will bottleneck on the write performance of the single replacement disk, rather than of the entire vdev. But the time to complete that operation is far less crucial, since the vdev is not in a degraded state to begin with.

Conclusions

Distributed RAID vdevs are mostly intended for large storage servers; OpenZFS dRAID design and testing revolved largely around 90-disk systems. At smaller scale, traditional vdevs and spares remain as useful as they ever were.

We especially caution storage newbies to be careful with dRAID, since it’s a significantly more complex layout than a pool with traditional vdevs. The fast resilvering is fantastic, but dRAID takes a hit in both effective compression ratios and some performance scenarios due to its necessarily fixed-length stripes.

As conventional disks continue to get larger without significant performance increases, dRAID and its fast resilvering may become desirable even on smaller systems, but it’ll take some time to figure out exactly where the sweet spot begins. In the meantime, please remember that RAID is not a backup, and that includes dRAID!